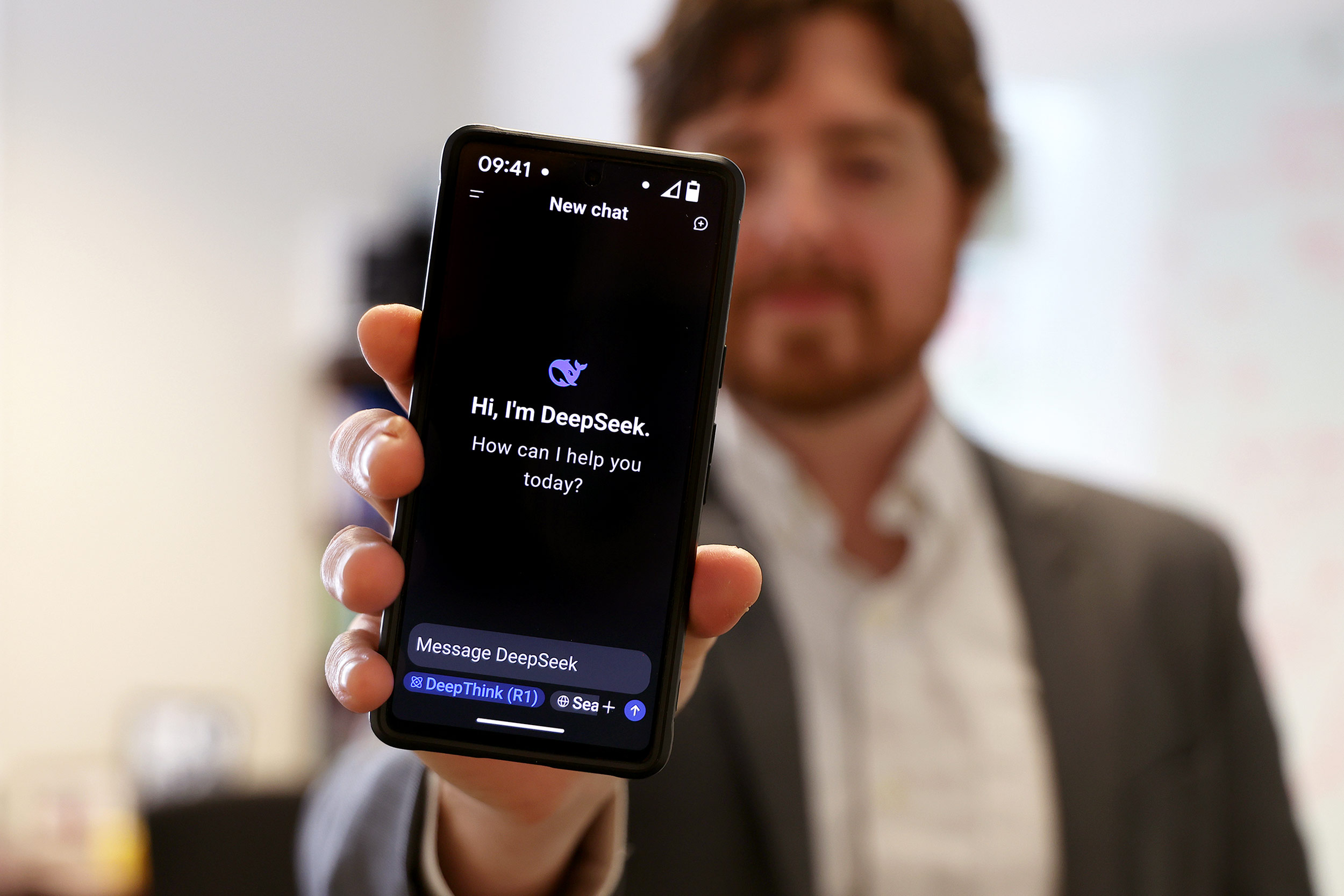

Best DeepSeek Android/iPhone Apps

Author: Janette | Posted: 25-02-01 19:14

Unsurprisingly, DeepSeek does abide by China's censorship laws, which means its chatbot will not give you any information about the Tiananmen Square massacre, among other censored subjects. Which means we're halfway to my next 'The sky is…' […] to 64. We replace all FFNs except those in the first three layers with MoE layers. The learning rate is then decayed […] over 4.3T tokens, following a cosine decay curve. The gradient clipping norm is set to 1.0. We employ a batch size scheduling strategy in which the batch size is gradually increased from 3072 to 15360 over the first 469B training tokens and then kept at 15360 for the remainder of training. (1) Compared with DeepSeek-V2-Base, thanks to the improvements in our model architecture, the scale-up of model size and training tokens, and the enhancement of data quality, DeepSeek-V3-Base achieves significantly better performance, as expected. Overall, DeepSeek-V3-Base comprehensively outperforms DeepSeek-V2-Base and Qwen2.5 72B Base, and surpasses LLaMA-3.1 405B Base on the majority of benchmarks, essentially becoming the strongest open-source model. Under our training framework and infrastructure, training DeepSeek-V3 on each trillion tokens requires only 180K H800 GPU hours, which is much cheaper than training 72B or 405B dense models. Note that due to changes in our evaluation framework over the past months, the performance of DeepSeek-V2-Base differs slightly from our previously reported results.
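
The training schedule described above can be sketched in a few lines of Python. This is a minimal illustration, not DeepSeek's training code: the batch-size ramp from 3072 to 15360 over 469B tokens, the 4.3T-token cosine decay window, the 1.0 clipping norm, and the 180K H800 GPU hours per trillion tokens come from the text, while the linear shape of the ramp, all function names, and the learning-rate endpoints passed in are assumptions (the actual learning-rate values are elided above).

```python
import math
from typing import List

# Figures taken from the text; everything else here is an assumption.
BS_START, BS_END = 3072, 15360      # batch-size ramp endpoints
BS_RAMP_TOKENS = 469e9              # ramp spans the first 469B tokens
DECAY_TOKENS = 4.3e12               # cosine decay window (4.3T tokens)
CLIP_NORM = 1.0                     # gradient clipping norm
GPU_HOURS_PER_T_TOKENS = 180_000    # H800 GPU hours per trillion tokens


def batch_size_at(tokens_seen: float) -> int:
    """Batch size after `tokens_seen` tokens, ASSUMING a linear ramp."""
    if tokens_seen >= BS_RAMP_TOKENS:
        return BS_END  # held constant for the rest of training
    frac = tokens_seen / BS_RAMP_TOKENS
    return int(BS_START + frac * (BS_END - BS_START))


def cosine_decay(tokens_into_decay: float, lr_start: float, lr_end: float) -> float:
    """Cosine-decay the learning rate from lr_start to lr_end over DECAY_TOKENS."""
    t = min(max(tokens_into_decay / DECAY_TOKENS, 0.0), 1.0)  # progress in [0, 1]
    return lr_end + 0.5 * (lr_start - lr_end) * (1.0 + math.cos(math.pi * t))


def clip_by_global_norm(grads: List[List[float]], max_norm: float = CLIP_NORM) -> List[List[float]]:
    """Rescale all gradients together so their global L2 norm is at most max_norm."""
    total = math.sqrt(sum(g * g for tensor in grads for g in tensor))
    if total <= max_norm:
        return grads
    scale = max_norm / total
    return [[g * scale for g in tensor] for tensor in grads]


def gpu_hours(tokens: float) -> float:
    """Training cost implied by 180K GPU hours per trillion tokens."""
    return GPU_HOURS_PER_T_TOKENS * tokens / 1e12
```

For example, `batch_size_at(234.5e9)` (halfway through the ramp) gives 9216 under the linear assumption, and `gpu_hours(2e12)` gives 360000 GPU hours. Global-norm clipping rescales every gradient by the same factor, so the update direction is preserved while its size is capped.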

After releasing DeepSeek-V2 in May 2024, which offered strong performance at a low price, DeepSeek became known as the catalyst for China's A.I. […] We adopt the same approach as DeepSeek-V2 (DeepSeek-AI, 2024c) to enable long-context capabilities in DeepSeek-V3. Following our earlier work (DeepSeek-AI, 2024b, c), we adopt perplexity-based evaluation for datasets including HellaSwag, PIQA, WinoGrande, RACE-Middle, RACE-High, MMLU, MMLU-Redux, MMLU-Pro, MMMLU, ARC-Easy, ARC-Challenge, C-Eval, CMMLU, C3, and CCPM, and adopt generation-based evaluation for TriviaQA, NaturalQuestions, DROP, MATH, GSM8K, MGSM, HumanEval, MBPP, LiveCodeBench-Base, CRUXEval, BBH, AGIEval, CLUEWSC, CMRC, and CMath. This is a big deal because it says that if you want to control AI systems, you need to control not only the basic resources (e.g., compute, electricity) but also the platforms the systems are served on (e.g., proprietary websites), so that you don't leak the really valuable stuff: samples, including chains of thought, from reasoning models. We hope to see future vendors develop hardware that offloads these communication tasks from the valuable computation unit, the SM, serving as a GPU co-processor or a network co-processor like NVIDIA SHARP (Graham et al.). With this unified interface, computation units can easily perform operations such as read, write, multicast, and reduce across the entire IB-NVLink-unified domain by submitting communication requests based on simple primitives.
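
Perplexity-based evaluation, unlike generation-based evaluation, does not ask the model to write an answer; it scores each candidate answer by how likely the model finds it and picks the most likely one. The sketch below assumes a hypothetical `score(context, option)` callable returning per-token log-probabilities; `toy_score` exists only to make the example runnable and is not a language model.

```python
import math
from typing import Callable, List, Sequence

# Hypothetical scorer interface: log-probabilities of each option token,
# conditioned on the context. A real harness would query a language model.
LogProbFn = Callable[[str, str], Sequence[float]]


def perplexity(logprobs: Sequence[float]) -> float:
    """exp of the mean negative log-likelihood per token."""
    return math.exp(-sum(logprobs) / len(logprobs))


def pick_by_perplexity(context: str, options: Sequence[str], score: LogProbFn) -> int:
    """Return the index of the option with the lowest perplexity."""
    ppls = [perplexity(score(context, opt)) for opt in options]
    return min(range(len(options)), key=lambda i: ppls[i])


def toy_score(context: str, option: str) -> List[float]:
    """Toy stand-in: words already present in the context are 'likely'."""
    return [math.log(0.9) if word in context else math.log(0.1)
            for word in option.split()]


ctx = "The sky is blue. On a clear day the sky is"
print(pick_by_perplexity(ctx, [" green", " blue"], toy_score))  # prints 1
```

Averaging the log-likelihood over tokens, as perplexity does, keeps longer options from being penalized merely for containing more tokens.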

For non-reasoning data, such as creative writing, role-play, and simple question answering, we use DeepSeek-V2.5 to generate responses and enlist human annotators to verify the accuracy and correctness of the data. We incorporate prompts from diverse domains, such as coding, math, writing, role-playing, and question answering, during the RL process. Rewards play a pivotal role in RL, steering the optimization process. "Roads, bridges, and intersections are all designed for creatures that process at 10 bits/s." Unlike other quantum technology subcategories, the potential defense applications of quantum sensors are relatively clear and achievable in the near to mid term. Secondly, although our deployment strategy for DeepSeek-V3 has achieved an end-to-end generation speed more than twice that of DeepSeek-V2, there still remains potential for further improvement. Since the release of ChatGPT in November 2022, American AI companies have been laser-focused on building bigger, more powerful, more expansive, and more power- and resource-intensive large language models. The best is yet to come: "While INTELLECT-1 demonstrates encouraging benchmark results and represents the first model of its size successfully trained on a decentralized network of GPUs, it still lags behind current state-of-the-art models trained on an order of magnitude more tokens," they write.
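
One way to make the role of rewards concrete is a dispatcher that applies a rule-based check when a prompt has a reference answer and otherwise defers to a learned reward model that sees the question and the answer. This split is an assumption for illustration, not DeepSeek's reward pipeline; `reward_model` is a hypothetical callable, and `toy_reward_model` below is a placeholder, not a real reward model.

```python
from typing import Callable, Optional

# Hypothetical reward-model interface: scores an answer given its question.
RewardModel = Callable[[str, str], float]


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace before comparing answers."""
    return " ".join(text.lower().split())


def reward(question: str, answer: str, reference: Optional[str],
           reward_model: RewardModel) -> float:
    """Rule-based reward if a reference answer exists, else defer to a reward model."""
    if reference is not None:
        # Verifiable prompt (e.g. a math problem with a known final answer).
        return 1.0 if normalize(answer) == normalize(reference) else 0.0
    # Open-ended prompt (e.g. creative writing): score from question and answer.
    return reward_model(question, answer)


# Placeholder scorer for the example only; NOT a real reward model.
def toy_reward_model(question: str, answer: str) -> float:
    return min(len(answer.split()) / 50, 1.0)


print(reward("What is 2 + 2?", " 4 ", "4", toy_reward_model))  # 1.0
print(reward("Write a poem.", "roses are red", None, toy_reward_model))  # 0.06
```

Keeping the exact-match path separate means verifiable prompts get a reward that cannot be gamed by a learned scorer's blind spots.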

[…] during the first 2K steps. […] During training, each sequence is packed from multiple samples.

• Forwarding data between the IB (InfiniBand) and NVLink domains while aggregating IB traffic destined for multiple GPUs within the same node from a single GPU.

[…] 0.0001, just to avoid extreme imbalance within any single sequence. A typical use case in developer tools is autocompletion based on context. OpenAI recently rolled out its Operator agent, which can effectively use a computer on your behalf, if you pay $200 for the Pro subscription. Meanwhile, OpenAI CEO Sam Altman welcomed DeepSeek to the AI race, stating "r1 is an impressive model, particularly around what they're able to deliver for the price," in a recent post on X. "We will obviously deliver much better models and also it's legit invigorating to have a new competitor!" Conversely, for questions without a definitive ground truth, such as those involving creative writing, the reward model is tasked with providing feedback based on the question and the corresponding answer as inputs.
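
Packing several samples into one training sequence can be sketched with a simple greedy pass. This is an illustrative assumption rather than DeepSeek's packer: the function name, the padding token, and the choice to truncate over-long samples are hypothetical, and the sketch ignores attention masking between packed samples.

```python
from typing import List


def pack_sequences(samples: List[List[int]], max_len: int, pad_id: int = 0) -> List[List[int]]:
    """Greedily pack tokenized samples into sequences of exactly max_len tokens."""
    packed: List[List[int]] = []
    current: List[int] = []
    for sample in samples:
        if len(sample) > max_len:
            sample = sample[:max_len]  # truncate over-long samples (an assumption)
        if len(current) + len(sample) > max_len:
            # Sample does not fit: pad the current sequence and start a new one.
            packed.append(current + [pad_id] * (max_len - len(current)))
            current = []
        current.extend(sample)
    if current:
        packed.append(current + [pad_id] * (max_len - len(current)))
    return packed


print(pack_sequences([[1, 2, 3], [4, 5], [6, 7, 8, 9], [10]], max_len=5))
# [[1, 2, 3, 4, 5], [6, 7, 8, 9, 10]]
```

Greedy first-fit keeps sample order intact; bin-packing heuristics can reduce padding further at the cost of reordering samples.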
