The Success of the Company's A.I.

Posted by Esperanza on 25-02-01 18:12

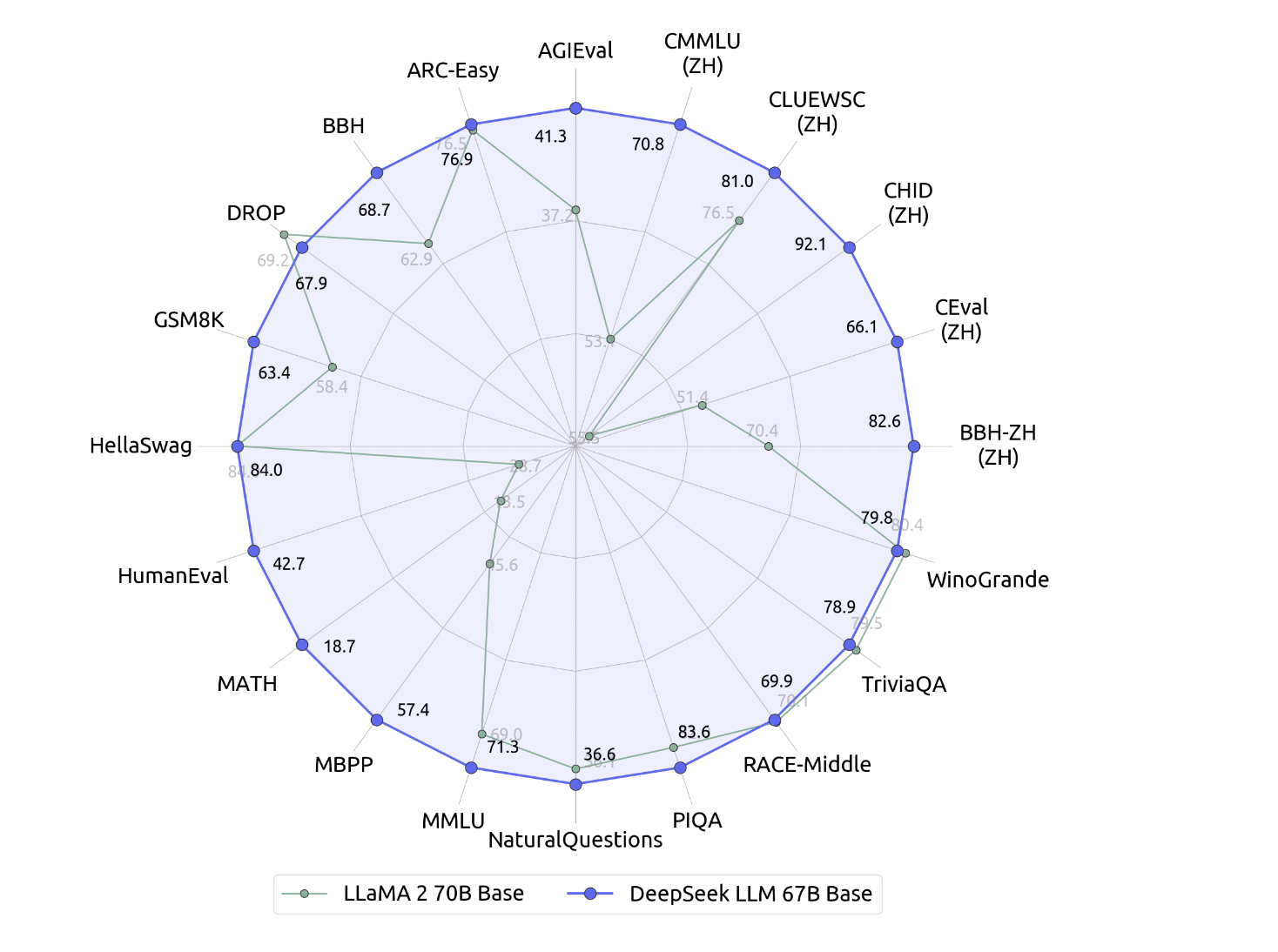

After causing shockwaves with an AI model whose capabilities rival the creations of Google and OpenAI, China’s DeepSeek is facing questions about whether its bold claims stand up to scrutiny. Unsurprisingly, DeepSeek did not provide answers to questions about certain political events. The reward model produced reward signals both for questions with objective but free-form answers and for questions without objective answers (such as creative writing). "It’s plausible to me that they can train a model with $6m," Domingos added. After data preparation, you can use the sample shell script to fine-tune deepseek-ai/deepseek-coder-6.7b-instruct. This is a non-streaming example; you can set the stream parameter to true to get a streaming response. DeepSeek-V3 uses significantly fewer resources than its peers: for example, whereas the world's leading A.I. companies train their models on 16,000 graphics processing units (GPUs), if not more, DeepSeek claims to have needed only about 2,000 GPUs, namely Nvidia's H800 series chip. The DeepSeek-V3 series (including Base and Chat) supports commercial use.
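
The non-streaming API example mentioned above is not reproduced in this post. As a minimal sketch, assuming DeepSeek's OpenAI-compatible chat-completions endpoint (`https://api.deepseek.com/chat/completions`) and the `deepseek-chat` model name, switching between a non-streaming and a streaming response comes down to the boolean `stream` field in the JSON body. The helper `build_chat_request` is hypothetical and only builds the request; it does not send it.

```python
import json
import urllib.request

# Assumed endpoint and model name for DeepSeek's OpenAI-compatible API.
API_URL = "https://api.deepseek.com/chat/completions"
MODEL = "deepseek-chat"


def build_chat_request(prompt: str, api_key: str, stream: bool = False) -> urllib.request.Request:
    """Build (but do not send) a chat-completion POST request.

    With stream=False the server is expected to return one JSON response;
    with stream=True it is expected to send the answer back incrementally.
    """
    body = {
        "model": MODEL,
        "messages": [{"role": "user", "content": prompt}],
        "stream": stream,
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


# Placeholder key; a real key is required to actually send the request.
req = build_chat_request("Hello!", api_key="YOUR_API_KEY")
print(json.loads(req.data)["stream"])  # False: a non-streaming request
# To send it: urllib.request.urlopen(req)
```

Passing `stream=True` produces the same request with only that one field flipped.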

Ollama is a free, open-source tool that lets users run natural language processing models locally. It offers both offline pipeline processing and online deployment capabilities, integrating seamlessly with PyTorch-based workflows. DeepSeek offers a range of solutions tailored to our clients’ actual goals. DeepSeek claimed that it exceeded the performance of OpenAI o1 on benchmarks such as the American Invitational Mathematics Examination (AIME) and MATH. For coding, DeepSeek Coder achieves state-of-the-art performance among open-source code models across multiple programming languages and various benchmarks. Next, we need the Continue VS Code extension. Refer to the Continue VS Code page for details on how to use the extension. If you are running VS Code on the same machine that hosts Ollama, you could try CodeGPT, but I couldn't get it to work when Ollama was self-hosted on a machine remote from the one running VS Code (at least not without modifying the extension files). "If they’d spend more time working on the code and reproduce the DeepSeek idea theirselves it will be better than talking on the paper," Wang added, using an English translation of a Chinese idiom about people who engage in idle talk.
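
For the remote-host setup described above, it can help to confirm that the Ollama server is reachable from the machine running VS Code before configuring any extension. This is a minimal sketch, assuming Ollama's default port 11434 and its `/api/generate` endpoint; the host name `gpu-box.local` and the model tag `deepseek-coder:6.7b` are hypothetical placeholders. Ollama listens only on localhost by default, so the server usually needs to be started with `OLLAMA_HOST=0.0.0.0` to accept connections from another machine.

```python
import json
import urllib.request

OLLAMA_PORT = 11434  # Ollama's default port


def ollama_generate_request(host: str, model: str, prompt: str) -> urllib.request.Request:
    """Build a non-streaming /api/generate request for an Ollama server on `host`."""
    url = f"http://{host}:{OLLAMA_PORT}/api/generate"
    body = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(host: str, model: str, prompt: str, timeout: float = 60.0) -> str:
    """Send the request and return the generated text (requires a reachable server)."""
    req = ollama_generate_request(host, model, prompt)
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        # A non-streaming reply is a single JSON object with a "response" field.
        return json.loads(resp.read())["response"]


# Hypothetical remote host and model tag; replace with your own.
req = ollama_generate_request("gpu-box.local", "deepseek-coder:6.7b", "Write hello world in Go")
print(req.full_url)  # http://gpu-box.local:11434/api/generate
```

If `ask(...)` succeeds from the VS Code machine, the remaining problems are most likely in the extension's configuration rather than in the network path.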

Ollama is a free, open-source device that enables customers to run Natural Language Processing fashions domestically. It gives each offline pipeline processing and on-line deployment capabilities, seamlessly integrating with PyTorch-based mostly workflows. DeepSeek provides a variety of solutions tailor-made to our clients’ actual goals. DeepSeek claimed that it exceeded efficiency of OpenAI o1 on benchmarks corresponding to American Invitational Mathematics Examination (AIME) and MATH. For coding capabilities, DeepSeek Coder achieves state-of-the-artwork performance among open-supply code fashions on a number of programming languages and numerous benchmarks. Now we want the Continue VS Code extension. Discuss with the Continue VS Code page for details on how to use the extension. If you're working VS Code on the identical machine as you're hosting ollama, you may try CodeGPT however I couldn't get it to work when ollama is self-hosted on a machine remote to the place I was running VS Code (nicely not without modifying the extension recordsdata). "If they’d spend extra time working on the code and reproduce the DeepSeek thought theirselves it is going to be higher than speaking on the paper," Wang added, utilizing an English translation of a Chinese idiom about people who interact in idle discuss.

The tech-heavy Nasdaq 100 rose 1.59 percent after dropping more than 3 percent the previous day. DeepSeek reduced communication overhead by rearranging, every 10 minutes, which machine each expert was placed on, so that certain machines were not queried more often than others; by adding auxiliary load-balancing losses to the training loss function; and by other load-balancing techniques. Even before the generative AI era, machine learning had already made significant strides in improving developer productivity. True, I'm guilty of mixing real LLMs with transfer learning. Investigating the system's transfer learning capabilities could be an interesting area of future research. Dependence on the proof assistant: the system's performance is heavily dependent on the capabilities of the proof assistant it is integrated with. If the proof assistant has limitations or biases, this could impair the system's ability to learn effectively. When asked the following questions, the AI assistant responded: "Sorry, that’s beyond my current scope."
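
To make the idea of an auxiliary load-balancing loss concrete, here is a minimal sketch of the widely used Switch-Transformer-style formulation, generalized to top-k routing. It is an illustration under that assumption, not necessarily the exact loss DeepSeek used. For each expert i, `f_i` is the normalized fraction of routing slots sent to it and `P_i` is its mean router probability; the loss `alpha * sum_i f_i * P_i` equals `alpha` when router probabilities are uniform and grows as routing concentrates on a few experts. The weight `alpha = 0.01` is an illustrative default.

```python
import numpy as np


def load_balancing_loss(router_logits: np.ndarray, top_k: int, alpha: float = 0.01) -> float:
    """Auxiliary load-balancing loss for a mixture-of-experts router.

    router_logits: array of shape (num_tokens, num_experts).
    Returns alpha * sum_i f_i * P_i, where
      f_i = num_experts / (top_k * num_tokens) * (#tokens routed to expert i)
      P_i = mean router probability assigned to expert i.
    """
    num_tokens, num_experts = router_logits.shape

    # Softmax over experts (subtract the row max for numerical stability).
    shifted = router_logits - router_logits.max(axis=1, keepdims=True)
    probs = np.exp(shifted)
    probs /= probs.sum(axis=1, keepdims=True)

    # Indices of the top-k experts chosen for each token.
    topk_idx = np.argsort(-probs, axis=1)[:, :top_k]
    counts = np.bincount(topk_idx.ravel(), minlength=num_experts)

    f = counts * num_experts / (top_k * num_tokens)  # normalized dispatch fraction
    p = probs.mean(axis=0)                           # mean routing probability
    return float(alpha * np.sum(f * p))


# Uniform router probabilities give exactly alpha.
print(load_balancing_loss(np.zeros((8, 4)), top_k=2, alpha=1.0))  # 1.0

# Random logits, just to show typical usage.
rng = np.random.default_rng(0)
print(load_balancing_loss(rng.normal(size=(16, 8)), top_k=2))
```

In training, this term would be added to the main language-modeling loss, with a small `alpha` so that it nudges the router toward balance without overriding the primary objective. The periodic re-placement of experts across machines is a separate, systems-level measure and is not modeled here.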

The user asks a question, and the Assistant solves it. By 27 January 2025 the app had surpassed ChatGPT as the highest-rated free app on the iOS App Store in the United States; its chatbot reportedly answers questions, solves logic problems and writes computer programs on par with other chatbots on the market, according to benchmark tests used by American A.I. companies. The DeepSeek Assistant uses the V3 model as a chatbot app for Apple iOS and Android. However, The Wall Street Journal reported that when it used 15 problems from the 2024 edition of AIME, the o1 model reached a solution faster than DeepSeek-R1-Lite-Preview. The company also released some "DeepSeek-R1-Distill" models, which are not initialized on V3-Base but instead on other pretrained open-weight models, including LLaMA and Qwen, and then fine-tuned on synthetic data generated by R1. We release DeepSeek-Prover-V1.5 with 7B parameters, including base, SFT and RL models, to the public.

The consumer asks a query, and the Assistant solves it. By 27 January 2025 the app had surpassed ChatGPT as the very best-rated free deepseek app on the iOS App Store in the United States; its chatbot reportedly answers questions, solves logic problems and writes laptop packages on par with different chatbots available on the market, in accordance with benchmark checks utilized by American A.I. Assistant, which uses the V3 model as a chatbot app for Apple IOS and Android. However, The Wall Street Journal acknowledged when it used 15 issues from the 2024 edition of AIME, the o1 mannequin reached a solution sooner than DeepSeek-R1-Lite-Preview. The Wall Street Journal. The company also released some "DeepSeek-R1-Distill" models, which aren't initialized on V3-Base, however instead are initialized from different pretrained open-weight models, together with LLaMA and Qwen, then positive-tuned on artificial knowledge generated by R1. We release the DeepSeek-Prover-V1.5 with 7B parameters, together with base, SFT and RL fashions, to the public.

If you have any type of concerns regarding where and the best ways to use ديب سيك, you could call us at the web site.