Don't Be Fooled By DeepSeek

Page information

Author: Demetria | Comments: 0 | Views: 17 | Posted: 25-02-01 11:04

However, DeepSeek is currently completely free to use as a chatbot on mobile and on the web, and that's a great advantage for it to have. But beneath all of this I have a sense of lurking horror - AI systems have gotten so useful that the thing that will set people apart from one another is not specific hard-won skills for using AI systems, but rather just having a high level of curiosity and agency. There has been recent movement by American legislators towards closing perceived gaps in AIS - most notably, various bills seek to mandate AIS compliance on a per-device basis as well as per-account, where the ability to access devices capable of running or training AI systems would require an AIS account to be associated with the device. These bills have received significant pushback, with critics saying this would represent an unprecedented level of government surveillance on individuals, and would involve citizens being treated as ‘guilty until proven innocent’ rather than ‘innocent until proven guilty’. Additional controversies centered on the perceived regulatory capture of AIS - although most of the large-scale AI providers protested it in public, various commentators noted that the AIS would place a significant cost burden on anyone wishing to offer AI services, thus entrenching various existing businesses.

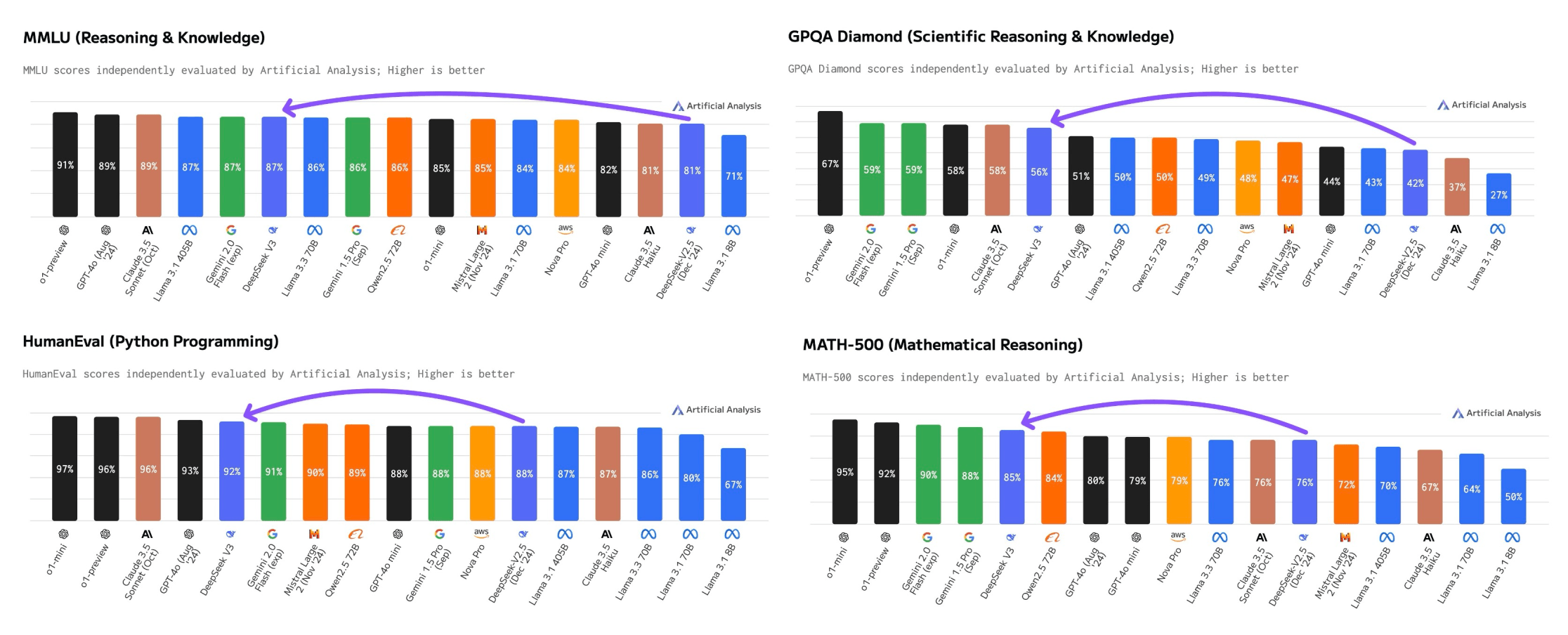

They offer native Code Interpreter SDKs for Python and JavaScript/TypeScript. DeepSeek-Coder-V2 is an open-source Mixture-of-Experts (MoE) code language model that achieves performance comparable to GPT4-Turbo in code-specific tasks. AutoRT can be used both to gather data for tasks and to perform the tasks themselves. In other words, you take a bunch of robots (here, some relatively simple Google bots with a manipulator arm and eyes and mobility) and give them access to a giant model. R1 is significant because it broadly matches OpenAI’s o1 model on a range of reasoning tasks and challenges the notion that Western AI companies hold a significant lead over Chinese ones. This is all easier than you might expect: the main thing that strikes me here, if you read the paper closely, is that none of this is that complicated. But perhaps most significantly, buried in the paper is an important insight: you can convert pretty much any LLM into a reasoning model if you finetune it on the right mix of data - here, 800k samples showing questions and answers along with the chains of thought written by the model while answering them. Why this matters - a lot of notions of control in AI policy get harder if you need fewer than a million samples to convert any model into a ‘thinker’: the most underhyped part of this release is the demonstration that you can take models not trained in any kind of major RL paradigm (e.g., Llama-70b) and convert them into powerful reasoning models using just 800k samples from a strong reasoner.
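
To make the finetuning idea above concrete, here is a minimal sketch of what one such sample might look like once rendered as training text. The field names, the `ReasoningSample` class, the `to_training_text` helper, and the `<think>` delimiters are all assumptions made for illustration; the post does not describe the actual data format.

```python
from dataclasses import dataclass


@dataclass
class ReasoningSample:
    """One hypothetical distillation sample: a question, the reasoning
    trace written by the stronger model, and its final answer."""
    question: str
    chain_of_thought: str
    answer: str


def to_training_text(sample: ReasoningSample) -> str:
    """Render a sample as a single string for supervised finetuning.

    The template (including the <think> tags) is an assumed layout,
    not a format confirmed by the source.
    """
    return (
        f"Question: {sample.question}\n"
        f"<think>\n{sample.chain_of_thought}\n</think>\n"
        f"Answer: {sample.answer}"
    )


if __name__ == "__main__":
    sample = ReasoningSample(
        question="What is 12 * 7?",
        chain_of_thought="12 * 7 = 10 * 7 + 2 * 7 = 70 + 14 = 84.",
        answer="84",
    )
    print(to_training_text(sample))
```

A dataset of roughly 800k strings like this, written by a strong reasoner, is the kind of thing the smaller model would be finetuned on; the training loop itself is out of scope for this sketch.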

Get started with Mem0 using pip. Things got a little easier with the arrival of generative models, but to get the best performance out of them you typically had to build very complicated prompts and also plug the system into a larger machine to get it to do truly useful things. Testing: Google tested the system over the course of 7 months across 4 office buildings and with a fleet of at times 20 simultaneously controlled robots - this yielded "a collection of 77,000 real-world robotic trials with both teleoperation and autonomous execution". Why this matters - speeding up the AI production function with a big model: AutoRT shows how we can take the dividends of a fast-moving part of AI (generative models) and use these to speed up development of a comparatively slower-moving part of AI (smart robots). "The kind of data collected by AutoRT tends to be highly diverse, leading to fewer samples per task and lots of variety in scenes and object configurations," Google writes. Just tap the Search button (or click it if you are using the web version) and then whatever prompt you type in becomes a web search.

So I started digging into self-hosting AI models and quickly found out that Ollama could help with that. I also looked through various other ways to start using the vast number of models on Huggingface, but all roads led to Rome. Then he sat down and took out a pad of paper and let his hand sketch strategies for The Final Game as he looked into space, waiting for the household machines to bring him his breakfast and his coffee. The paper presents a new benchmark called CodeUpdateArena to test how well LLMs can update their knowledge to handle changes in code APIs. This is a Plain English Papers summary of a research paper called DeepSeekMath: Pushing the Limits of Mathematical Reasoning in Open Language Models. In new research from Tufts University, Northeastern University, Cornell University, and Berkeley, the researchers demonstrate this again, showing that a standard LLM (Llama-3-1-Instruct, 8b) is capable of performing "protein engineering by Pareto and experiment-budget constrained optimization, demonstrating success on both synthetic and experimental fitness landscapes". Personal anecdote time: when I first learned about Vite in a previous job, I took half a day to convert a project that was using react-scripts to Vite. And I will do it again, and again, in every project I work on that is still using react-scripts.
