What It's Worth Learning About DeepSeek and Why

Posted by Audrey on 25-02-01 10:11

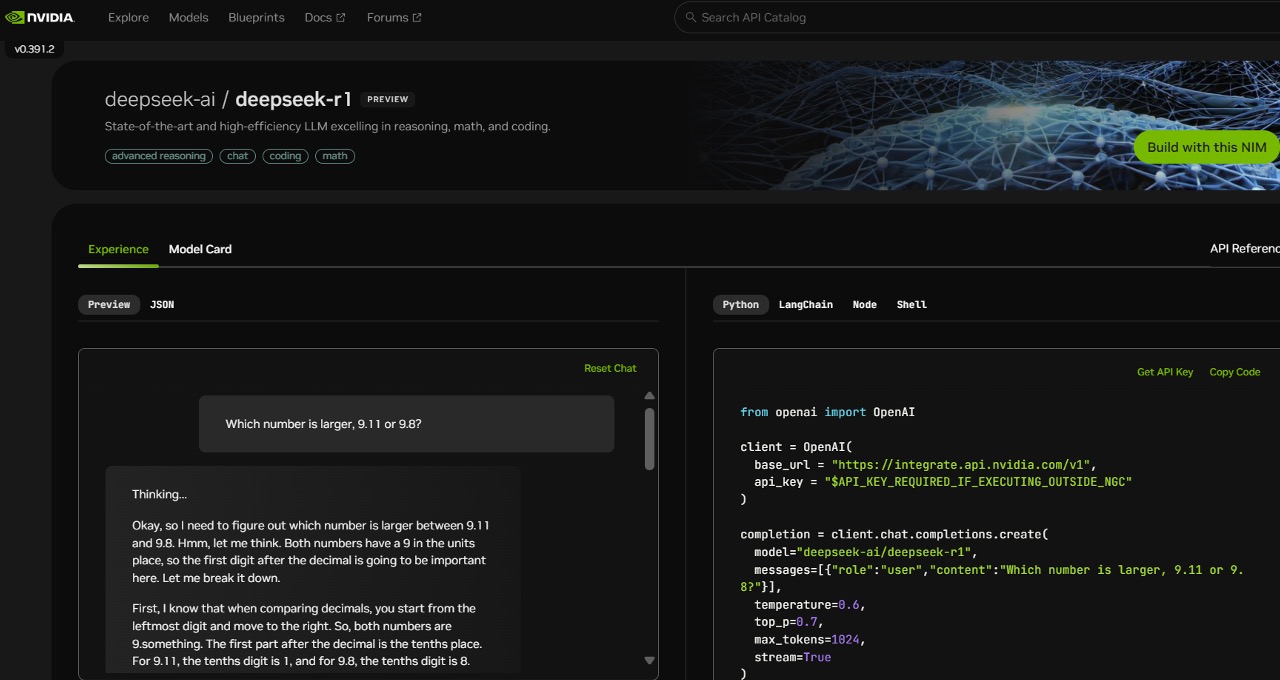

Now to another DeepSeek giant, DeepSeek-Coder-V2! Training data: Compared to the original DeepSeek-Coder, DeepSeek-Coder-V2 expanded the training data significantly by adding a further 6 trillion tokens, bringing the total to 10.2 trillion tokens. At the small scale, we train a baseline MoE model comprising 15.7B total parameters on 1.33T tokens. The total compute used for the DeepSeek V3 model for pretraining experiments would likely be 2-4 times the amount reported in the paper. This makes the model faster and more efficient. Reinforcement learning: The model uses a more sophisticated reinforcement learning technique, including Group Relative Policy Optimization (GRPO), which uses feedback from compilers and test cases, and a learned reward model to fine-tune the Coder. For example, if you have a piece of code with something missing in the middle, the model can predict what should be there based on the surrounding code. We have explored DeepSeek’s approach to the development of advanced models. The larger model is more powerful, and its architecture is based on DeepSeek's MoE approach with 21 billion "active" parameters.

On 20 November 2024, DeepSeek-R1-Lite-Preview became accessible through DeepSeek's API, as well as through a chat interface after logging in. We’ve seen improvements in overall user satisfaction with Claude 3.5 Sonnet across these users, so in this month’s Sourcegraph release we’re making it the default model for chat and prompts. Model size and architecture: The DeepSeek-Coder-V2 model comes in two main sizes: a smaller model with 16B parameters and a larger one with 236B parameters. And that implication has triggered a massive selloff of Nvidia stock, leading to a 17% loss in stock price for the company: a $600 billion decrease in value for that one company in a single day (Monday, Jan 27). That’s the biggest single-day dollar-value loss for any company in U.S. DeepSeek, one of the most sophisticated AI startups in China, has published details on the infrastructure it uses to train its models. DeepSeek-Coder-V2 uses the same pipeline as DeepSeekMath. In code editing, DeepSeek-Coder-V2 0724 scores 72.9%, which matches the latest GPT-4o and beats every other model except Claude-3.5-Sonnet at 77.4%.
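As a quick worked example of what "active" parameters mean for the larger model, the sketch below divides the 21 billion active parameters by the 236B total, both figures taken from the text. The variable names are illustrative only.

```python
# Figures quoted in the text for the larger DeepSeek-Coder-V2 model.
TOTAL_PARAMS_B = 236   # total parameters, in billions
ACTIVE_PARAMS_B = 21   # "active" parameters used per token, in billions

# Share of the model's weights that take part in processing any one token.
active_fraction = ACTIVE_PARAMS_B / TOTAL_PARAMS_B
print(f"Active share per token: {active_fraction:.1%}")
```

Under these figures, fewer than one in ten parameters is used for any given token, which is the usual argument for why an MoE model can be large and still comparatively cheap to run.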

7b-2: This model takes the steps and schema definition, translating them into corresponding SQL code. 2. Initializing AI Models: It creates instances of two AI models: - @hf/thebloke/deepseek-coder-6.7b-base-awq: This model understands natural language instructions and generates the steps in human-readable format. Excels in both English and Chinese language tasks, in code generation and mathematical reasoning. The second model receives the generated steps and the schema definition, combining the information for SQL generation. Compared with DeepSeek 67B, DeepSeek-V2 achieves stronger performance, and meanwhile saves 42.5% of training costs, reduces the KV cache by 93.3%, and boosts the maximum generation throughput to 5.76 times. Training requires significant computational resources because of the vast dataset. No proprietary data or training tricks were utilized: the Mistral 7B - Instruct model is a simple and preliminary demonstration that the base model can easily be fine-tuned to achieve good performance. Like o1, R1 is a "reasoning" model. In an interview earlier this year, Wenfeng characterized closed-source AI like OpenAI’s as a "temporary" moat. Their initial attempt to beat the benchmarks led them to create models that were rather mundane, similar to many others.
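The two-model flow described above (one model writes human-readable steps, a second turns the steps plus the schema into SQL) can be sketched as plain function chaining. Everything here is a hypothetical illustration: the function names, the prompt layout, and the stand-in models are assumptions, and no real Cloudflare API is called.

```python
from typing import Callable

# A "model" in this sketch is any callable mapping a prompt to a completion.
Model = Callable[[str], str]


def build_sql_prompt(steps: str, schema: str) -> str:
    """Combine the generated steps with the schema for the second model."""
    return (
        "Schema:\n" + schema.strip() + "\n\n"
        "Steps:\n" + steps.strip() + "\n\n"
        "Translate the steps into SQL."
    )


def generate_sql(instruction: str, schema: str,
                 step_model: Model, sql_model: Model) -> dict[str, str]:
    """Stage 1 produces steps; stage 2 converts steps + schema into SQL."""
    steps = step_model(instruction)
    sql = sql_model(build_sql_prompt(steps, schema))
    return {"steps": steps, "sql": sql}


# Stand-in models so the sketch runs offline; real ones would call an API.
def fake_step_model(prompt: str) -> str:
    return "1. Insert one random user into the users table."


def fake_sql_model(prompt: str) -> str:
    return "INSERT INTO users (name) VALUES ('Ada');"


schema = "CREATE TABLE users (id SERIAL PRIMARY KEY, name TEXT);"
result = generate_sql("Insert random data", schema, fake_step_model, fake_sql_model)
print(result["sql"])
```

Passing the models in as callables keeps the chaining logic testable without network access; swapping in real model calls would only change the two stand-in functions.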

What's behind DeepSeek-Coder-V2, making it so special that it beats GPT4-Turbo, Claude-3-Opus, Gemini-1.5-Pro, Llama-3-70B and Codestral in coding and math? The performance of DeepSeek-Coder-V2 on math and code benchmarks. It’s trained on 60% source code, 10% math corpus, and 30% natural language. This is achieved by leveraging Cloudflare's AI models to understand and generate natural language instructions, which are then converted into SQL commands. The USV-based Embedded Obstacle Segmentation challenge aims to address this limitation by encouraging development of innovative solutions and optimization of established semantic segmentation architectures which are efficient on embedded hardware… This is a submission for the Cloudflare AI Challenge. Understanding Cloudflare Workers: I began by researching how to use Cloudflare Workers and Hono for serverless applications. I built a serverless application using Cloudflare Workers and Hono, a lightweight web framework for Cloudflare Workers. Building this application involved several steps, from understanding the requirements to implementing the solution. The application is designed to generate steps for inserting random data into a PostgreSQL database and then convert these steps into SQL queries. Italy’s data protection agency has blocked the Chinese AI chatbot DeepSeek after its developers failed to disclose how it collects user data or whether it is stored on Chinese servers.
