DeepSeek Features

Page information

Author: Alyce Gorsuch · Comments: 0 · Views: 10 · Posted: 25-02-01 06:29

The DeepSeek v3 paper is out, after yesterday's mysterious launch. Loads of fascinating details in here. The regulation dictates that generative AI companies should "uphold core socialist values" and prohibits content that "subverts state authority" and "threatens or compromises national security and interests"; it additionally compels AI developers to undergo security evaluations and register their algorithms with the CAC before public release. In China, however, alignment training has become a powerful tool for the Chinese authorities to restrict the chatbots: to pass the CAC registration, Chinese developers must fine-tune their models to align with "core socialist values" and Beijing’s standard of political correctness. While the Chinese government maintains that the PRC implements the socialist "rule of law," Western scholars have generally criticized the PRC as a country with "rule by law" due to the lack of judicial independence. They represent the interests of the country and the nation, and are symbols of the country and the nation. These features are increasingly important in the context of training large frontier AI models. Unlike traditional online content such as social media posts or search engine results, text generated by large language models is unpredictable. It both narrowly targets problematic end uses and contains broad clauses that could sweep in multiple advanced Chinese consumer AI models.

This ends up using 3.4375 bpw. The first two categories contain end-use provisions targeting military, intelligence, or mass surveillance applications, with the latter specifically targeting the use of quantum technologies for encryption breaking and quantum key distribution. Using compute benchmarks, however, especially in the context of national security risks, is somewhat arbitrary. However, with the slowing of Moore’s Law, which predicted the doubling of transistors every two years, and as transistor scaling (i.e., miniaturization) approaches fundamental physical limits, this approach could yield diminishing returns and may not be enough to maintain a significant lead over China in the long run. According to a report by the Institute for Defense Analyses, within the next five years, China could leverage quantum sensors to enhance its counter-stealth, counter-submarine, image detection, and position, navigation, and timing capabilities. They can "chain" together multiple smaller models, each trained below the compute threshold, to create a system with capabilities comparable to a large frontier model, or simply "fine-tune" an existing and freely available advanced open-source model from GitHub. To find out, we queried four Chinese chatbots on political questions and compared their responses on Hugging Face (an open-source platform where developers can upload models that are subject to less censorship) and on their Chinese platforms, where CAC censorship applies more strictly.
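The 3.4375 bpw (bits per weight) figure at the start of the paragraph above is given without its derivation. As a rough sketch, the number can be reproduced if one assumes a 3-bit block quantization layout of the kind used by llama.cpp-style k-quants: super-blocks of 16 blocks with 16 weights each, a 6-bit scale per block, and one 16-bit scale per super-block. That layout is an assumption here, not something the post states, and the function and parameter names are illustrative.

```python
def bits_per_weight(weights_per_block, blocks_per_superblock,
                    bits_per_quant, bits_per_block_scale,
                    bits_per_superblock_scale):
    """Average storage cost per weight for a block-quantized tensor."""
    n_weights = weights_per_block * blocks_per_superblock
    total_bits = (
        n_weights * bits_per_quant                      # the quantized weights
        + blocks_per_superblock * bits_per_block_scale  # one scale per block
        + bits_per_superblock_scale                     # one scale per super-block
    )
    return total_bits / n_weights


# Assumed layout (not stated in the post): 16 blocks x 16 weights,
# 3-bit quants, 6-bit block scales, one 16-bit super-block scale.
Q3_BPW = bits_per_weight(16, 16, 3, 6, 16)
print(Q3_BPW)  # 3.4375
```

Under these assumptions the scales add 112 bits of overhead to every 256 weights, which is where the extra 0.4375 bits beyond the nominal 3 come from.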

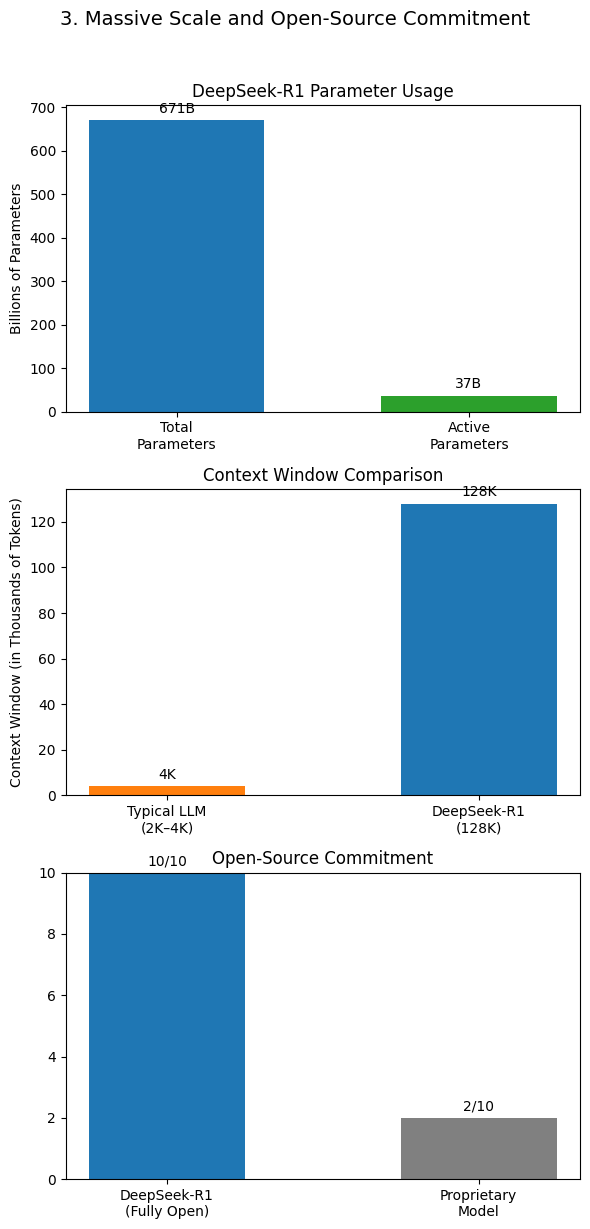

The reason the United States has included general-purpose frontier AI models under the "prohibited" category is likely because they can be "fine-tuned" at low cost to carry out malicious or subversive activities, such as creating autonomous weapons or unknown malware variants. Efficient training of large models demands high-bandwidth communication, low latency, and fast data transfer between chips for both forward passes (propagating activations) and backward passes (propagating gradients). Current large language models (LLMs) can have more than 1 trillion parameters, requiring computing operations spread across tens of thousands of high-performance chips inside a data center. Censorship regulation and implementation in China’s leading models have been effective in restricting the range of possible outputs of the LLMs without suffocating their ability to answer open-ended questions. Creating socially acceptable outputs for generative AI is hard. Abstract: We present DeepSeek-V3, a strong Mixture-of-Experts (MoE) language model with 671B total parameters with 37B activated for each token. Inexplicably, the model named DeepSeek-Coder-V2 Chat in the paper was released as DeepSeek-Coder-V2-Instruct on Hugging Face. DeepSeek Chat has two variants of 7B and 67B parameters, which are trained on a dataset of two trillion tokens, says the maker.
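To make the "671B total parameters with 37B activated for each token" claim concrete, here is a minimal sketch of top-k expert routing, the general idea behind Mixture-of-Experts layers. It is a generic illustration, not DeepSeek-V3's actual router, which this post does not describe; `top_k_route` and the example scores are hypothetical.

```python
import math


def top_k_route(scores, k):
    """Pick the k highest-scoring experts and softmax-normalize their weights.

    Returns a dict mapping expert index -> routing weight. Only these
    experts would run for the token; the rest stay idle.
    """
    top = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:k]
    peak = max(scores[i] for i in top)  # subtract the max for numerical stability
    exps = {i: math.exp(scores[i] - peak) for i in top}
    norm = sum(exps.values())
    return {i: e / norm for i, e in exps.items()}


# Figures quoted in the abstract above.
TOTAL_PARAMS_B = 671
ACTIVE_PARAMS_B = 37
active_fraction = ACTIVE_PARAMS_B / TOTAL_PARAMS_B  # roughly 5.5% of parameters per token

# Hypothetical router scores for four experts; two are selected.
routing = top_k_route([0.1, 2.0, -1.0, 1.5], k=2)
print(sorted(routing))            # [1, 3]
print(f"{active_fraction:.1%}")   # 5.5%
```

Because each token only touches the selected experts, per-token compute scales with the activated parameters rather than the total, which is how a 671B-parameter model can run with the cost profile of a much smaller dense one.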

The DeepSeek V2 Chat and DeepSeek Coder V2 models have been merged and upgraded into the new model, DeepSeek V2.5. Alignment refers to AI companies training their models to generate responses that align with human values. The notifications required under the OISM will call for companies to provide detailed information about their investments in China, providing a dynamic, high-resolution snapshot of the Chinese investment landscape. The effectiveness of the proposed OISM hinges on a number of assumptions: (1) that the withdrawal of U.S. Notably, it surpasses DeepSeek-V2.5-0905 by a significant margin of 20%, highlighting substantial improvements in tackling simple tasks and showcasing the effectiveness of its advancements. Once they’ve done this they do large-scale reinforcement learning training, which "focuses on enhancing the model’s reasoning capabilities, particularly in reasoning-intensive tasks such as coding, mathematics, science, and logic reasoning, which involve well-defined problems with clear solutions". After training, it was deployed on H800 clusters. • At an economical cost of only 2.664M H800 GPU hours, we complete the pre-training of DeepSeek-V3 on 14.8T tokens, producing the currently strongest open-source base model.
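The pre-training figures quoted above (2.664M H800 GPU hours for 14.8T tokens) can be turned into a per-token budget with simple arithmetic. The sketch below does that; the two helper functions take a rental price and a cluster size as inputs, and any values passed to them are hypothetical, since this post states neither.

```python
# Figures quoted in the post.
PRETRAIN_GPU_HOURS = 2.664e6   # H800 GPU hours
PRETRAIN_TOKENS_T = 14.8       # trillions of tokens

gpu_hours_per_trillion = PRETRAIN_GPU_HOURS / PRETRAIN_TOKENS_T
print(round(gpu_hours_per_trillion))  # 180000


def rental_cost_usd(gpu_hours, usd_per_gpu_hour):
    """Cost of a run at a given rental price (the price is an assumption)."""
    return gpu_hours * usd_per_gpu_hour


def wall_clock_days(gpu_hours, n_gpus):
    """Elapsed days if the work is spread evenly over n_gpus (idealized)."""
    return gpu_hours / n_gpus / 24
```

For example, `rental_cost_usd(PRETRAIN_GPU_HOURS, price)` gives a budget estimate for whatever hourly price one assumes, and `wall_clock_days(gpu_hours_per_trillion, n)` estimates how long each trillion tokens would take on a cluster of `n` GPUs, ignoring communication overhead and downtime.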