Four Ways You Can Use DeepSeek to Become Irresistible to Customers

Posted by Imogen on 25-02-01 04:01

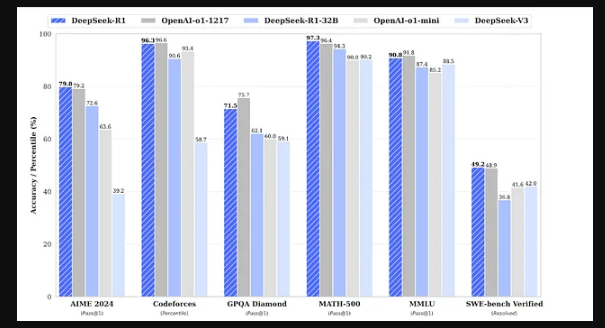

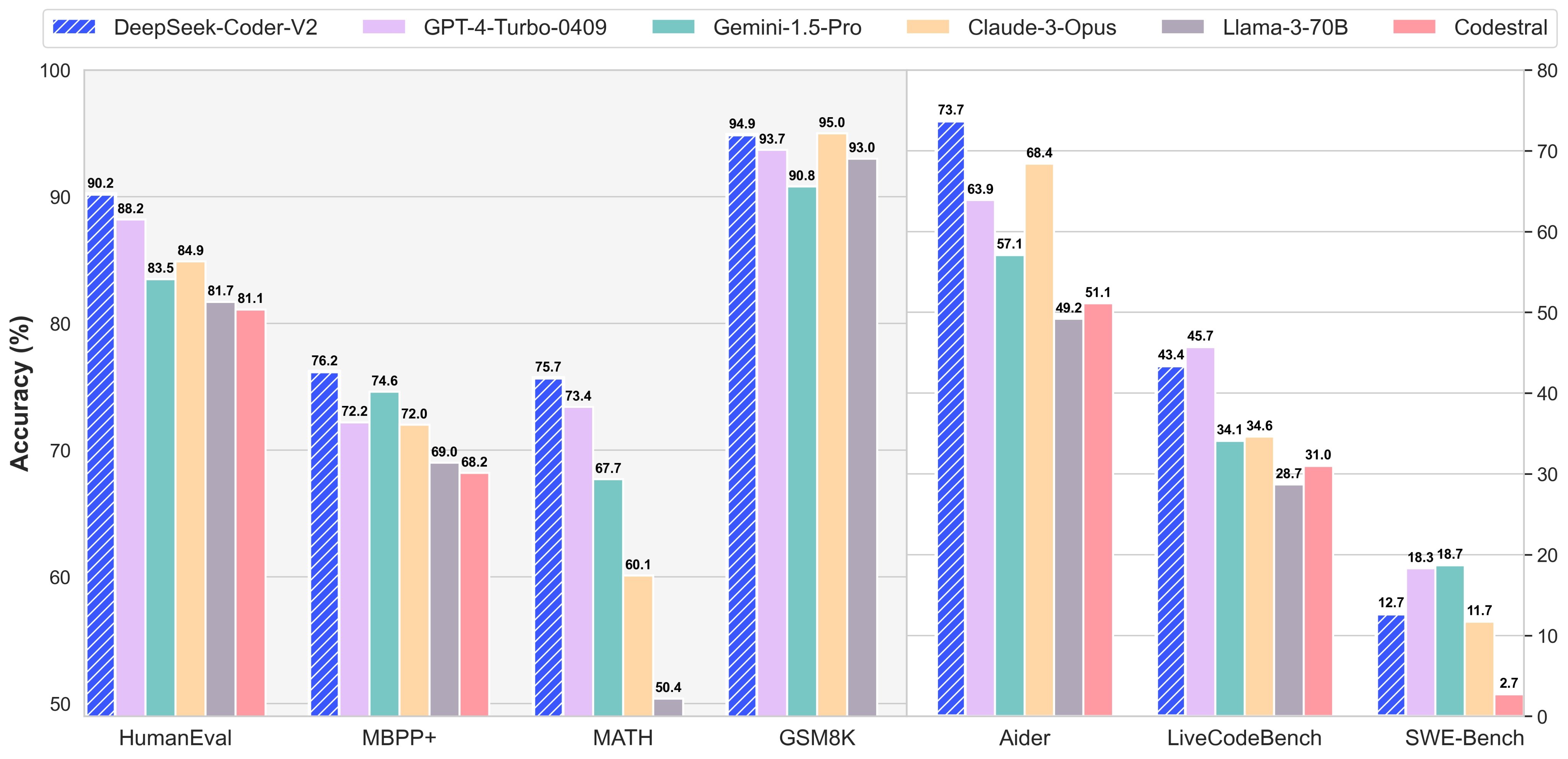

TL;DR: DeepSeek is an excellent step forward in the development of open AI approaches. DeepSeek's founder, Liang Wenfeng, has been compared to OpenAI CEO Sam Altman, with CNN calling him the Sam Altman of China and an evangelist for AI. Compared with DeepSeek-V2, we optimize the pre-training corpus by enhancing the ratio of mathematical and programming samples, while expanding multilingual coverage beyond English and Chinese. During the pre-training stage, training DeepSeek-V3 on each trillion tokens requires only 180K H800 GPU hours, i.e., 3.7 days on our cluster with 2048 H800 GPUs. This code requires the rand crate to be installed. Evaluating large language models trained on code. • Code, Math, and Reasoning: (1) DeepSeek-V3 achieves state-of-the-art performance on math-related benchmarks among all non-long-CoT open-source and closed-source models. (2) For factuality benchmarks, DeepSeek-V3 demonstrates superior performance among open-source models on both SimpleQA and Chinese SimpleQA. For engineering-related tasks, while DeepSeek-V3 performs slightly below Claude-Sonnet-3.5, it still outpaces all other models by a significant margin, demonstrating its competitiveness across diverse technical benchmarks. Meanwhile, we also maintain control over the output style and length of DeepSeek-V3.
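The "3.7 days" figure can be checked from the two numbers quoted with it. The sketch below is only a sanity check, assuming the cluster runs at full utilization around the clock; the function name `wall_clock_days` is made up for illustration.

```rust
/// Wall-clock days needed to spend `gpu_hours` on a cluster of `gpus` GPUs,
/// assuming every GPU is busy 24 hours a day (an idealization).
fn wall_clock_days(gpu_hours: f64, gpus: f64) -> f64 {
    gpu_hours / gpus / 24.0
}

fn main() {
    // 180K H800 GPU hours per trillion tokens, on 2048 H800 GPUs.
    let days = wall_clock_days(180_000.0, 2048.0);
    println!("{:.2} days per trillion tokens", days);
}
```

This comes out at roughly 3.66 days, which rounds to the 3.7 days stated in the text.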

During the post-training stage, we distill the reasoning capability from the DeepSeek-R1 series of models, and meanwhile carefully maintain the balance between model accuracy and generation length. In the first stage, the maximum context length is extended to 32K, and in the second stage, it is further extended to 128K. Following this, we conduct post-training, including Supervised Fine-Tuning (SFT) and Reinforcement Learning (RL) on the base model of DeepSeek-V3, to align it with human preferences and further unlock its potential. On the other hand, MTP may enable the model to pre-plan its representations for better prediction of future tokens. Models are pre-trained using 1.8T tokens and a 4K window size in this step. Llama 3 (Large Language Model Meta AI), the next generation of Llama 2, was trained by Meta on 15T tokens (7x more than Llama 2) and comes in two sizes, 8B and 70B. Llama 3.1 405B was trained for 30,840,000 GPU hours, 11x that used by DeepSeek-V3, for a model that benchmarks slightly worse. Code Llama is specialized for code-specific tasks and isn't appropriate as a foundation model for other tasks.
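As a rough check on the "11x" comparison, the sketch below divides the Llama 3.1 405B figure by the 2.664M H800 GPU hours that this post quotes for DeepSeek-V3 pre-training. The function name `gpu_hour_ratio` is made up for illustration, and the post does not say which DeepSeek-V3 total the "11x" is based on, so treat the result as an approximation rather than a reproduction of that claim.

```rust
/// Ratio between two GPU-hour budgets.
fn gpu_hour_ratio(a: f64, b: f64) -> f64 {
    a / b
}

fn main() {
    let llama_405b_hours = 30_840_000.0; // figure quoted in the text
    let deepseek_v3_pretrain_hours = 2_664_000.0; // pre-training only, as quoted in this post
    println!(
        "{:.1}x",
        gpu_hour_ratio(llama_405b_hours, deepseek_v3_pretrain_hours)
    );
}
```

Against the pre-training figure alone the ratio is a little above 11, which is consistent with the rounded claim.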

• At an economical cost of only 2.664M H800 GPU hours, we complete the pre-training of DeepSeek-V3 on 14.8T tokens, producing the currently strongest open-source base model. The pre-training process is remarkably stable. Support for Transposed GEMM Operations. Numeric Trait: This trait defines basic operations for numeric types, including multiplication and a method to get the value one. The insert method iterates over each character in the given word and inserts it into the Trie if it's not already present. The unwrap() method is used to extract the result from the Result type, which is returned by the function. CodeNinja: - Created a function that calculated a product or difference based on a condition. Pattern matching: The filtered variable is created by using pattern matching to filter out any negative numbers from the input vector. The model particularly excels at coding and reasoning tasks while using significantly fewer resources than comparable models. The example was relatively straightforward, emphasizing simple arithmetic and branching using a match expression. We've submitted a PR to the popular quantization repository llama.cpp to fully support all HuggingFace pre-tokenizers, including ours. "GPT-4 finished training late 2022. There have been a lot of algorithmic and hardware improvements since 2022, driving down the cost of training a GPT-4 class model."
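The code being described above is not included in the post, so the following is only a minimal sketch of what a Trie `insert` and a pattern-matching filter of that kind might look like in Rust. All names here (`TrieNode`, `Trie`, `contains`, `filter_non_negative`) are assumptions chosen for illustration, not the original code.

```rust
use std::collections::HashMap;

/// One node of the trie: children keyed by character, plus an end-of-word flag.
#[derive(Default)]
struct TrieNode {
    children: HashMap<char, TrieNode>,
    is_end: bool,
}

#[derive(Default)]
struct Trie {
    root: TrieNode,
}

impl Trie {
    /// Walk the word character by character, creating a child node only
    /// when that character is not already present at the current level.
    fn insert(&mut self, word: &str) {
        let mut node = &mut self.root;
        for ch in word.chars() {
            node = node.children.entry(ch).or_default();
        }
        node.is_end = true;
    }

    /// Return true only if `word` was inserted as a complete word.
    fn contains(&self, word: &str) -> bool {
        let mut node = &self.root;
        for ch in word.chars() {
            match node.children.get(&ch) {
                Some(next) => node = next,
                None => return false,
            }
        }
        node.is_end
    }
}

/// Keep only the non-negative numbers, using a match arm with a guard.
fn filter_non_negative(input: &[i32]) -> Vec<i32> {
    input
        .iter()
        .filter_map(|&n| match n {
            n if n >= 0 => Some(n),
            _ => None,
        })
        .collect()
}

fn main() {
    let mut trie = Trie::default();
    trie.insert("deep");
    trie.insert("deepseek");
    println!("{}", trie.contains("deep"));
    println!("{}", trie.contains("seek"));
    println!("{:?}", filter_non_negative(&[3, -1, 0, -7, 5]));
}
```

Using `entry(ch).or_default()` keeps the "insert only if not already present" logic in a single call instead of a separate lookup followed by an insert.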

The model checkpoints are available at this https URL. To further push the boundaries of open-source model capabilities, we scale up our models and introduce DeepSeek-V3, a large Mixture-of-Experts (MoE) model with 671B parameters, of which 37B are activated for each token. For details, please refer to Reasoning Model. Notably, it even outperforms o1-preview on specific benchmarks, such as MATH-500, demonstrating its robust mathematical reasoning capabilities. Low-precision training has emerged as a promising solution for efficient training (Kalamkar et al., 2019; Narang et al., 2017; Peng et al., 2023b; Dettmers et al., 2022), its evolution being closely tied to advancements in hardware capabilities (Micikevicius et al., 2022; Luo et al., 2024; Rouhani et al., 2023a). In this work, we introduce an FP8 mixed precision training framework and, for the first time, validate its effectiveness on an extremely large-scale model. Reference disambiguation datasets include CLUEWSC (Xu et al., 2020) and WinoGrande Sakaguchi et al.
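Only a fraction of an MoE model's parameters is active per token (here 37B of 671B, about 5.5%) because a router sends each token to a small subset of experts. The sketch below shows a generic top-k gate under simple assumptions: scores are positive, and the selected scores are renormalized to sum to 1. It is not DeepSeek-V3's actual router, and the name `route_top_k` is made up for illustration.

```rust
/// Pick the `k` highest-scoring experts and renormalize their scores
/// so the selected weights sum to 1. Assumes the scores are positive.
fn route_top_k(scores: &[f32], k: usize) -> Vec<(usize, f32)> {
    let mut indexed: Vec<(usize, f32)> = scores.iter().copied().enumerate().collect();
    // Sort descending by score; total_cmp gives a total order on f32.
    indexed.sort_by(|a, b| b.1.total_cmp(&a.1));
    indexed.truncate(k);
    let sum: f32 = indexed.iter().map(|&(_, s)| s).sum();
    indexed.into_iter().map(|(i, s)| (i, s / sum)).collect()
}

fn main() {
    // Hypothetical router scores for four experts; only two are activated.
    let routed = route_top_k(&[0.1, 0.4, 0.2, 0.3], 2);
    for (expert, weight) in &routed {
        println!("expert {} weight {:.3}", expert, weight);
    }
    // Fraction of parameters active per token, from the figures in the text.
    println!("{:.1}% of parameters active", 37.0 / 671.0 * 100.0);
}
```

Experts that are not selected do no work for that token, which is why compute per token tracks the activated parameter count and not the total.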
