Where Is the Best DeepSeek?


Author: Clay | Comments: 0 | Views: 12 | Posted: 25-02-01 03:37

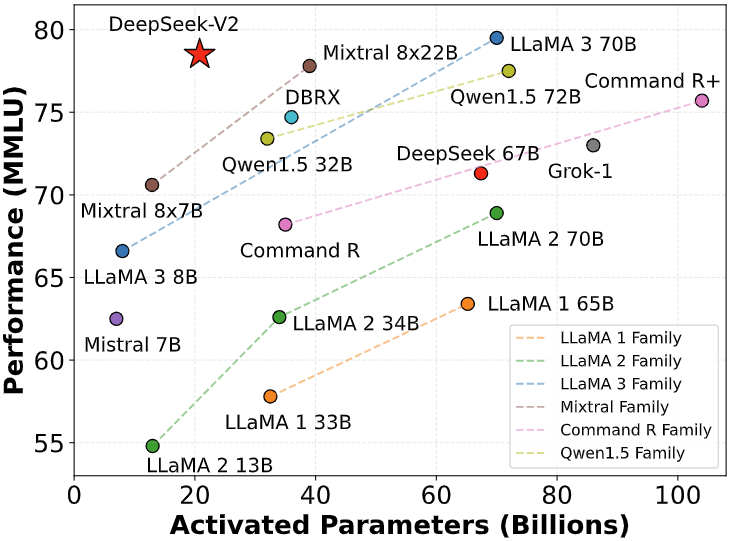

This repo contains GPTQ model files for DeepSeek's Deepseek Coder 33B Instruct. Aider lets you pair program with LLMs to edit code in your local git repository. Start a new project or work with an existing git repo. The files provided are tested to work with Transformers. Note: If you are a CTO/VP of Engineering, it would be a great help to buy Copilot subscriptions for your team. Open-source tools like Composio further help orchestrate these AI-driven workflows across different systems and bring productivity improvements. I left The Odin Project and ran to Google, then to AI tools like Gemini, ChatGPT, and DeepSeek for help, and then to YouTube. The costs are currently high, but companies like DeepSeek are cutting them down by the day. The implication is that increasingly powerful AI systems, combined with well-crafted data generation scenarios, may be able to bootstrap themselves beyond natural data distributions. DeepSeek Coder is a capable coding model trained on two trillion code and natural language tokens. GPT-4o, Claude 3.5 Sonnet, Claude 3 Opus and DeepSeek Coder V2. DeepSeek Coder uses the HuggingFace Tokenizer to implement the byte-level BPE algorithm, with specially designed pre-tokenizers to ensure optimal performance. Having access to this privileged information, we can then evaluate the performance of a "student" that has to solve the task from scratch…
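
To make the byte-level BPE idea mentioned above more concrete, here is a minimal toy sketch in plain Python. It is not DeepSeek Coder's tokenizer and does not use the HuggingFace tokenizers API; the function names (`train_byte_bpe`, `apply_merge`, `encode`, `decode`) are hypothetical, and the pre-tokenization step is left out entirely. It only illustrates the core loop: start from raw UTF-8 bytes and repeatedly merge the most frequent adjacent pair.

```python
from collections import Counter


def apply_merge(seq, left, right):
    """Replace every adjacent (left, right) pair in seq with the merged token."""
    out = []
    i = 0
    while i < len(seq):
        if i + 1 < len(seq) and seq[i] == left and seq[i + 1] == right:
            out.append(left + right)
            i += 2
        else:
            out.append(seq[i])
            i += 1
    return out


def train_byte_bpe(text, num_merges):
    """Learn up to num_merges merge rules over the UTF-8 bytes of text.

    Toy version: no pre-tokenizer, no special tokens (assumptions of this sketch).
    """
    # Starting from single bytes means any string can be represented.
    seq = [bytes([b]) for b in text.encode("utf-8")]
    merges = []
    for _ in range(num_merges):
        pairs = Counter(zip(seq, seq[1:]))
        if not pairs:
            break
        (left, right), count = pairs.most_common(1)[0]
        if count < 2:
            break  # nothing repeats, so further merges would not compress
        merges.append((left, right))
        seq = apply_merge(seq, left, right)
    return merges


def encode(text, merges):
    """Tokenize text by applying the learned merges in the order they were learned."""
    seq = [bytes([b]) for b in text.encode("utf-8")]
    for left, right in merges:
        seq = apply_merge(seq, left, right)
    return seq


def decode(tokens):
    """Concatenate byte tokens and decode them back to a string."""
    return b"".join(tokens).decode("utf-8")
```

For example, training on `"abababab"` with two merges first learns `(b"a", b"b")` and then `(b"ab", b"ab")`, and `decode(encode(s, merges))` returns `s` for any string, including non-ASCII text, because the base vocabulary is bytes rather than characters.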


Note: It's important to note that while these models are powerful, they can sometimes hallucinate or provide incorrect information, necessitating careful verification. There are plenty of good features that help reduce bugs and lower the overall fatigue of writing good code. But it inspires people who don't just want to be limited to research to go there. Those that don't use additional test-time compute do well on language tasks at higher speed and lower cost. China may well have enough industry veterans and accumulated know-how to coach and mentor the next wave of Chinese champions. I've previously written about the company in this newsletter, noting that it seems to have the kind of talent and output that looks in-distribution with major AI developers like OpenAI and Anthropic. You can install it from source, use a package manager like Yum, Homebrew, apt, etc., or use a Docker container. How about repeat(), minmax(), fr, complex calc() again, auto-fit and auto-fill (when will you even use auto-fill?), and more. I told myself, if I could do something this beautiful with just these guys, what will happen when I add JavaScript?


While human oversight and instruction will remain crucial, the ability to generate code, automate workflows, and streamline processes promises to accelerate product development and innovation. It's not a product. At Middleware, we're committed to improving developer productivity. Our open-source DORA metrics product helps engineering teams improve efficiency by offering insights into PR reviews, identifying bottlenecks, and suggesting ways to enhance team performance across four important metrics. For example, RL on reasoning could improve over more training steps. DeepSeek-V3 is a general-purpose model, while DeepSeek-R1 focuses on reasoning tasks. DeepSeek-R1 is an advanced reasoning model, on par with the ChatGPT-o1 model. DeepSeek is the name of the Chinese startup that created the DeepSeek-V3 and DeepSeek-R1 LLMs; it was founded in May 2023 by Liang Wenfeng, an influential figure in the hedge fund and AI industries. But then here come calc() and clamp() (how do you figure out how to use those?